User Codes

Astrophysics

Athena (explicit, uniform grid MHD code)

Athena is a simple C code with few library dependencies, so it is straightforward to build on new machines.

It encapsulates its compiler flags, etc. in a Makeoptions.in file which is then processed by configure. I've used the following additions to Makeoptions.in on TCS and GPC:

<source lang="sh"> ifeq ($(MACHINE),scinettcs)

CC = mpcc_r LDR = mpcc_r OPT = -O5 -q64 -qarch=pwr6 -qtune=pwr6 -qcache=auto -qlargepage -qstrict MPIINC = MPILIB = CFLAGS = $(OPT) LIB = -ldl -lm

else ifeq ($(MACHINE),scinetgpc)

CC = mpicc LDR = mpicc OPT = -O3 MPIINC = MPILIB = CFLAGS = $(OPT) LIB = -lm

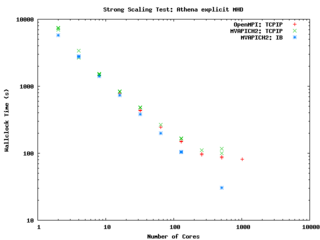

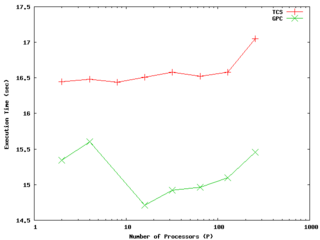

else ... endif endif </source> It performs quite well on the GPC, scaling extremely well even on a strong scaling test out to about 256 cores (32 nodes) on Gigabit ethernet, and performing beautifully on InfiniBand out to 512 cores (64 nodes).

-- ljdursi 19:20, 13 August 2009 (UTC)
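The machine-specific block in Makeoptions.in is chosen by the value of the MACHINE variable, which is presumably passed on the make command line (for example `make all MACHINE=scinetgpc`; this invocation is an assumption, not taken from the text above). The following is a minimal sketch of that selection mechanism using GNU make conditionals. The file name demo.mk, the `show` target, the helper name `show_compiler_for`, and the fallback compiler `cc` are all hypothetical and exist only for the demonstration.

```shell
# Sketch: show which compiler a MACHINE-keyed ifeq block selects.
# demo.mk, the "show" target and the fallback "cc" are hypothetical.
show_compiler_for() {
    local machine="$1"
    local tmp
    tmp=$(mktemp -d) || return 1
    # Write a tiny makefile with the same nested ifeq/else structure
    cat > "$tmp/demo.mk" <<'EOF'
ifeq ($(MACHINE),scinettcs)
CC = mpcc_r
else
ifeq ($(MACHINE),scinetgpc)
CC = mpicc
else
CC = cc
endif
endif
show: ; @echo $(CC)
EOF
    # MACHINE given on the command line decides which branch is used
    make -s -f "$tmp/demo.mk" MACHINE="$machine" show
    local status=$?
    rm -rf "$tmp"
    return $status
}
```

Calling `show_compiler_for scinetgpc` should print the compiler from the GPC branch; an unrecognized machine name falls through to the final `else`.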

FLASH3 (Adaptive Mesh reactive hydrodynamics; explicit hydro/MHD)

FLASH encapsulates its machine-dependent information in the FLASH3/sites directory. For the GPC, you'll have to

 module load intel
 module load openmpi
 module load hdf5/183-v16-openmpi

and with that, the following file (sites/scinetgpc/Makefile.h) works for me:
<source lang="sh">
# Must do module load hdf5/183-v16-openmpi
HDF5_PATH = ${SCINET_HDF5_BASE}
ZLIB_PATH = /usr/local

# ----------------------------------------------------------------------------
# Compiler and linker commands
# We use the f90 compiler as the linker, so some C libraries may explicitly
# need to be added into the link line.
# ----------------------------------------------------------------------------

# modules will put the right mpi in our path
FCOMP   = mpif77
CCOMP   = mpicc
CPPCOMP = mpiCC
LINK    = mpif77

# ----------------------------------------------------------------------------
# Compilation flags
# Three sets of compilation/linking flags are defined: one for optimized
# code, one for testing, and one for debugging. The default is to use the
# _OPT version. Specifying -debug to setup will pick the _DEBUG version,
# these should enable bounds checking. Specifying -test is used for
# flash_test, and is set for quick code generation, and (sometimes)
# profiling. The Makefile generated by setup will assign the generic token
# (ex. FFLAGS) to the proper set of flags (ex. FFLAGS_OPT).
# ----------------------------------------------------------------------------

FFLAGS_OPT   = -c -r8 -i4 -O3 -xSSE4.2
FFLAGS_DEBUG = -c -g -r8 -i4 -O0
FFLAGS_TEST  = -c -r8 -i4

# if we are using HDF5, we need to specify the path to the include files
CFLAGS_HDF5 = -I${HDF5_PATH}/include

CFLAGS_OPT   = -c -O3 -xSSE4.2
CFLAGS_TEST  = -c -O2
CFLAGS_DEBUG = -c -g

MDEFS =

.SUFFIXES: .o .c .f .F .h .fh .F90 .f90

# ----------------------------------------------------------------------------
# Linker flags
# There is a separate version of the linker flags for each of the _OPT,
# _DEBUG, and _TEST cases.
# ----------------------------------------------------------------------------

LFLAGS_OPT   = -o
LFLAGS_TEST  = -o
LFLAGS_DEBUG = -g -o

MACHOBJ =

MV = mv -f
AR = ar -r
RM = rm -f
CD = cd
RL = ranlib
ECHO = echo
</source>

-- ljdursi 22:11, 13 August 2009 (UTC)
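Because Makefile.h takes HDF5_PATH from the SCINET_HDF5_BASE environment variable (which appears to be set by the hdf5 module; that is an inference from the comment at the top of the file), a quick check before building may save a failed compile. The following is a minimal sketch; `check_hdf5_base` is a hypothetical helper name, and it only tests that the directory and its include subdirectory exist.

```shell
# Sketch: verify the HDF5 location that Makefile.h relies on before building.
# check_hdf5_base is a hypothetical helper; pass it the directory to check,
# normally "$SCINET_HDF5_BASE".
check_hdf5_base() {
    local base="$1"
    if [ -z "$base" ]; then
        echo "SCINET_HDF5_BASE is empty; was the hdf5 module loaded?" >&2
        return 1
    fi
    if [ ! -d "$base/include" ]; then
        echo "no include directory under $base" >&2
        return 1
    fi
    echo "HDF5 headers expected in $base/include"
}
```

One possible use is `check_hdf5_base "$SCINET_HDF5_BASE" && make` in the FLASH object directory, so that make only starts when the path looks sane.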

Aeronautics

Chemistry

GAMESS (US)

The GAMESS version January 12, 2009 R3 was built using the Intel v11.1 compilers and v3.2.2 MPI library, according to the instructions in http://software.intel.com/en-us/articles/building-gamess-with-intel-compilers-intel-mkl-and-intel-mpi-on-linux/

The required build scripts - comp, compall, lked - and run script - rungms - were modified to account for our own installation. In order to build GAMESS one first must ensure that the intel and intelmpi modules are loaded ("module load intel intelmpi"). This applies to running GAMESS as well. The module "gamess" must also be loaded in order to run GAMESS ("module load gamess").

The modified scripts are in the file /scinet/gpc/src/gamess-on-scinet.tar.gz

Running GAMESS

- Make sure the directory /scratch/$USER/gamess-scratch exists (the $SCINET_RUNGMS script will create it if it does not exist)

- Make sure the modules: intel, intelmpi, gamess are loaded (in your .bashrc: "module load intel intelmpi gamess").

- Create a Torque script to run GAMESS; sample scripts are given below.

- The GAMESS executable is in $SCINET_GAMESS_HOME/gamess.00.x

- The rungms script is in $SCINET_GAMESS_HOME/rungms (actually it is $SCINET_RUNGMS)

- For IB multinode runs, use the $SCINET_RUNGMS_IB script

- The rungms script takes 4 arguments: input file, executable number, number of compute processes, processors per node

For example, to run the input file /scratch/$USER/gamesstest01 on 8 CPUs with the default version (00) of the executable, on a machine with 8 cores:

 # load the gamess module in .bashrc
 module load gamess

 # run the program
 $SCINET_RUNGMS /scratch/$USER/gamesstest01 00 8 8

Here is a sample torque script for running a GAMESS calculation, on a single 8-core node:

<source lang="bash">
#!/bin/bash
#PBS -l nodes=1:ppn=8,walltime=48:00:00,os=centos53computeA
#PBS -N gamessjob

# To submit type: qsub gms.sh

# If not an interactive job (i.e. -I), then cd into the directory where
# I typed qsub.
if [ "$PBS_ENVIRONMENT" != "PBS_INTERACTIVE" ]; then
  if [ -n "$PBS_O_WORKDIR" ]; then
    cd "$PBS_O_WORKDIR"
  fi
fi

# the input file is typically named something like "gamessjob.inp"
# so the script will be run like "$SCINET_RUNGMS gamessjob 00 8 8"

# load the gamess module if not in .bashrc already
# actually, it MUST be in .bashrc
# module load gamess

# run the program
$SCINET_RUNGMS gamessjob 00 8 8
</source>

Here is a similar script, but this one uses 2 InfiniBand-connected nodes, and runs the appropriate $SCINET_RUNGMS_IB script to actually run the job:

<source lang="bash">
#!/bin/bash
#PBS -l nodes=2:ib:ppn=8,walltime=48:00:00,os=centos53computeA
#PBS -N gamessjob

# To submit type: qsub gmsib.sh

# If not an interactive job (i.e. -I), then cd into the directory where
# I typed qsub.
if [ "$PBS_ENVIRONMENT" != "PBS_INTERACTIVE" ]; then
  if [ -n "$PBS_O_WORKDIR" ]; then
    cd "$PBS_O_WORKDIR"
  fi
fi

# the input file is typically named something like "gamessjob.inp"
# so the script will be run like "$SCINET_RUNGMS_IB gamessjob 00 16 8"

# load the gamess module if not in .bashrc already
# actually, it MUST be in .bashrc
# module load gamess

# This script requests InfiniBand-connected nodes (:ib above)
# so it must run with the IB version of the rungms script,
# $SCINET_RUNGMS_IB
$SCINET_RUNGMS_IB gamessjob 00 16 8
</source>

-- dgruner 5 October 2009
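In both sample scripts above, the third rungms argument equals the number of nodes times the cores per node (1 × 8 = 8 and 2 × 8 = 16). The following is a minimal sketch of that arithmetic; `rungms_command` is a hypothetical helper that only prints the command line rather than running it, and it assumes that every multi-node job runs on InfiniBand nodes and therefore needs the IB script.

```shell
# Sketch: build the rungms command line from a node count and cores per node.
# rungms_command is a hypothetical helper; it prints the command, nothing more.
rungms_command() {
    local input="$1" version="$2" nodes="$3" ppn="$4"
    # total compute processes = nodes x processors per node
    local nproc="$(( $nodes * $ppn ))"
    # single quotes keep the variable name literal in the printed command
    local script='$SCINET_RUNGMS'
    if [ "$nodes" -gt 1 ]; then
        # assumption: multi-node runs use InfiniBand and the IB script
        script='$SCINET_RUNGMS_IB'
    fi
    printf '%s %s %s %d %d\n' "$script" "$input" "$version" "$nproc" "$ppn"
}

rungms_command gamessjob 00 1 8   # prints: $SCINET_RUNGMS gamessjob 00 8 8
rungms_command gamessjob 00 2 8   # prints: $SCINET_RUNGMS_IB gamessjob 00 16 8
```

The node count and ppn passed here should match the `nodes=` and `ppn=` values in the `#PBS -l` line of the job script.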

Tips from the Fekl Lab

Through trial and error, we have found a few useful things that we would like to share:

1. Two very useful open-source programs for visualizing GAMESS output files and generating input files are MacMolPlt and Avogadro. They are available for UNIX/Linux, Windows and Mac machines. However, input files that we generated with these programs on a Windows machine did not run. We don't know why.

2. WinSCP is a very useful tool with a graphical user interface for moving files from a local machine to SciNet. It also has text editing capabilities.

Hope this helps.

-- mzd 7 October 2009

Climate Modelling

Medicine/Bio

High Energy Physics

Structural Biology

Molecular simulation of proteins, lipids, carbohydrates, and other biologically relevant molecules.

Molecular Dynamics (MD) simulation

GROMACS

Please refer to the GROMACS page

NAMD

NAMD is one of the better-scaling MD packages out there. With sufficiently large systems, it is able to scale to hundreds or thousands of cores on SciNet. Below are details for compiling and running NAMD on SciNet.

More information regarding performance and different compile options coming soon...

Compiling NAMD for GPC

Ensure the proper compiler/MPI modules are loaded.
<source lang="sh">
module load intel
module load openmpi/1.3.3-intel-v11.0-ofed
</source>

Compile Charm++ and NAMD
<source lang="sh">
# Unpack source files and get required support libraries
tar -xzf NAMD_2.7b1_Source.tar.gz
cd NAMD_2.7b1_Source
tar -xf charm-6.1.tar
wget http://www.ks.uiuc.edu/Research/namd/libraries/fftw-linux-x86_64.tar.gz
wget http://www.ks.uiuc.edu/Research/namd/libraries/tcl-linux-x86_64.tar.gz
tar -xzf fftw-linux-x86_64.tar.gz; mv linux-x86_64 fftw
tar -xzf tcl-linux-x86_64.tar.gz; mv linux-x86_64 tcl

# Compile Charm++
cd charm-6.1
./build charm++ mpi-linux-x86_64 icc --basedir /scinet/gpc/mpi/openmpi/1.3.3-intel-v11.0-ofed/ --no-shared -O -DCMK_OPTIMIZE=1
cd ..

# Compile NAMD.
# Edit arch/Linux-x86_64-icc.arch and add "-lmpi" to the end of the CXXOPTS and COPTS line.
# Make a builds directory if you want different versions of NAMD compiled at the same time.
mkdir builds
./config builds/Linux-x86_64-icc --charm-arch mpi-linux-x86_64-icc
cd builds/Linux-x86_64-icc/
make -j4 namd2 # Adjust value of j as desired to specify number of simultaneous make targets.
</source>
--Cmadill 16:18, 27 August 2009 (UTC)
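The manual edit of arch/Linux-x86_64-icc.arch described in the build comments can also be scripted. The following is a minimal sketch, assuming that CXXOPTS and COPTS are each defined on a single line beginning with the variable name; `append_lmpi` is a hypothetical helper name. It keeps a `.bak` copy of the file and skips lines that already contain `-lmpi`.

```shell
# Sketch: append "-lmpi" to the CXXOPTS and COPTS lines of a NAMD arch file.
# append_lmpi is a hypothetical helper; it assumes one-line definitions.
append_lmpi() {
    local archfile="$1"
    # For each matching line that lacks -lmpi, replace any trailing
    # whitespace with " -lmpi". The original is saved as <file>.bak.
    sed -i.bak \
        -e '/^CXXOPTS[[:space:]]*=/{/-lmpi/!s/[[:space:]]*$/ -lmpi/;}' \
        -e '/^COPTS[[:space:]]*=/{/-lmpi/!s/[[:space:]]*$/ -lmpi/;}' \
        "$archfile"
}
```

From the NAMD source directory this would be called as `append_lmpi arch/Linux-x86_64-icc.arch` before running `./config`. It is worth inspecting the result by hand, since the layout of the arch file may differ between NAMD versions.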

Running Fortran

The default gcc on the development nodes is an old version, and its associated libraries are not available on the compute nodes. Ensure the line:

module load gcc

is in your .bashrc file.
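One way to add such a line without duplicating it on repeated runs is sketched below. `ensure_line` is a hypothetical helper name; it appends the line only when no identical line is already present in the file.

```shell
# Sketch: append a line to a startup file only if it is not already there.
# ensure_line is a hypothetical helper name.
ensure_line() {
    local file="$1" line="$2"
    # create the file if it does not exist yet
    touch "$file" || return 1
    # -x: match whole lines only, -F: treat the text as a fixed string
    if ! grep -qxF -- "$line" "$file"; then
        printf '%s\n' "$line" >> "$file"
    fi
}

# Typical use (commented out so that nothing is modified by accident):
# ensure_line "$HOME/.bashrc" "module load gcc"
```

The same helper could be used for the `module load intel intelmpi gamess` line required for GAMESS.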