BGQ

| Blue Gene/Q (BGQ) | |
|---|---|
| Installed | August 2012 |
| Operating System | RH6.2, CNK (Linux) |
| Number of Nodes | 2048 (32,768 cores), 512 (8,192 cores) |
| Interconnect | 5D Torus (jobs), QDR InfiniBand (I/O) |
| RAM/Node | 16 GB |
| Cores/Node | 16 (64 threads) |
| Login/Devel Node | bgq01, bgq02 |
| Vendor Compilers | bgxlc, bgxlf |
| Queue Submission | LoadLeveler |

Specifications

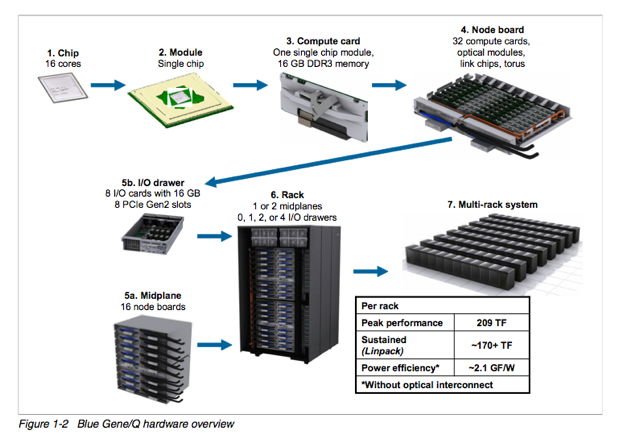

BGQ is an extremely dense and energy-efficient third-generation IBM supercomputer built around a system-on-a-chip compute node that has a 16-core 1.6 GHz PowerPC-based CPU (PowerPC A2) with 16 GB of RAM and runs a very lightweight Linux-like OS called CNK. The nodes (with 16 cores apiece) are bundled in groups of 32 into node boards, and 16 of these boards make up a midplane. With 2 midplanes per rack, this gives 16,384 cores and 16 TB of RAM per rack. The compute nodes are all connected together using a custom 5D torus high-speed interconnect. Each rack has 16 I/O nodes that run a full Red Hat Linux OS, manage the compute nodes, and mount the GPFS filesystem. SciNet has 2 BGQ systems: a half-rack, 8,192-core development system and a 2-rack, 32,768-core production system.
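The per-rack figures follow directly from the packaging hierarchy described above. The following is a minimal sketch of that arithmetic; the constant and function names are chosen here for illustration and are not part of any BGQ tool.

```python
# Packaging hierarchy as described in the text (names are illustrative).
CORES_PER_NODE = 16
RAM_PER_NODE_GB = 16
NODES_PER_NODE_BOARD = 32
NODE_BOARDS_PER_MIDPLANE = 16
MIDPLANES_PER_RACK = 2


def rack_totals(racks=1.0):
    """Return (nodes, cores, ram_gb) for a given number of racks."""
    nodes = int(racks * MIDPLANES_PER_RACK
                * NODE_BOARDS_PER_MIDPLANE * NODES_PER_NODE_BOARD)
    return nodes, nodes * CORES_PER_NODE, nodes * RAM_PER_NODE_GB


for label, racks in [("one rack", 1),
                     ("development system", 0.5),
                     ("production system", 2)]:
    nodes, cores, ram_gb = rack_totals(racks)
    print(f"{label}: {nodes} nodes, {cores} cores, {ram_gb / 1024:g} TB RAM")
```

One rack works out to 1024 nodes, 16,384 cores, and 16 TB of RAM, which matches the totals quoted for the half-rack and 2-rack systems when scaled.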

5D Torus Network

The network topology of Blue Gene/Q is a five-dimensional (5D) torus, with direct links between the nearest neighbors in the ±A, ±B, ±C, ±D, and ±E directions. As a result, only a few block sizes use the network efficiently:

| Node Boards | Compute Nodes | Cores | Torus Dimensions |
|---|---|---|---|
| 1 | 32 | 512 | 2x2x2x2x2 |
| 2 (adjacent pairs) | 64 | 1024 | 2x2x4x2x2 |
| 4 (quadrants) | 128 | 2048 | 2x2x4x4x2 |
| 8 (halves) | 256 | 4096 | 4x2x4x4x2 |
| 16 (midplane) | 512 | 8192 | 4x4x4x4x2 |
| 32 (1 rack) | 1024 | 16384 | 4x4x4x8x2 |
| 64 (2 racks) | 2048 | 32768 | 4x4x8x8x2 |
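Each row of the table is self-consistent: the product of the five torus dimensions equals the number of compute nodes, and each node contributes 16 cores. The sketch below checks this for the values in the table; the helper name torus_nodes is hypothetical and used only for illustration.

```python
from math import prod

CORES_PER_NODE = 16        # from the specifications above
NODES_PER_NODE_BOARD = 32  # from the specifications above


def torus_nodes(shape):
    """Number of compute nodes in a torus written as 'AxBxCxDxE'."""
    dims = [int(d) for d in shape.lower().split("x")]
    if len(dims) != 5:
        raise ValueError(f"expected 5 dimensions, got {len(dims)}: {shape!r}")
    return prod(dims)


# (node boards, torus dimensions) pairs copied from the table above.
BLOCKS = [
    (1, "2x2x2x2x2"),
    (2, "2x2x4x2x2"),
    (4, "2x2x4x4x2"),
    (8, "4x2x4x4x2"),
    (16, "4x4x4x4x2"),
    (32, "4x4x4x8x2"),
    (64, "4x4x8x8x2"),
]

for boards, shape in BLOCKS:
    nodes = torus_nodes(shape)
    # Every row should hold boards * 32 compute nodes.
    assert nodes == boards * NODES_PER_NODE_BOARD
    print(f"{boards:2d} boards  {shape}  {nodes:4d} nodes  "
          f"{nodes * CORES_PER_NODE:5d} cores")
```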

Devel Nodes

The development nodes for the BGQ are bgq01 for the half-rack development system and bgq02 for the 2-rack production system. They are IBM Power7 nodes which serve as compilation and submission hosts for the BGQ. Programs are cross-compiled for the BGQ on the Power7 nodes and then submitted to the queue using LoadLeveler.

Compilers

The BGQ uses the IBM XL compilers to cross-compile code for the compute nodes. Compilers are available for FORTRAN, C, and C++. By default the compilers produce static binaries; however, with BGQ it is now possible to use dynamic libraries as well. The compilers follow the XL conventions with the prefix bg, so bgxlc and bgxlf are the C and FORTRAN compilers respectively. Most users, however, will use the MPI variants, which are shown below.

    /bgsys/drivers/V1R1M1/ppc64/comm/xl/bin/mpich2version
    /bgsys/drivers/V1R1M1/ppc64/comm/xl/bin/mpixlc
    /bgsys/drivers/V1R1M1/ppc64/comm/xl/bin/mpixf90

Job Submission

As the BGQ architecture is different from that of the development nodes, the only way to test your program is to submit a job to the BGQ. Jobs are submitted through LoadLeveler using runjob, which is in many ways similar to mpirun or mpiexec, with a few BGQ-specific flags. As shown above in the network topology overview, there are only a few optimum job size configurations, and these are further constrained by each block requiring a minimum of one I/O node. In SciNet's configuration (with 8 I/O nodes per midplane), this makes 64 nodes (1024 cores) the smallest block size. Typically a block size matches the job size to offer fully dedicated resources to the job. Multiple jobs can be run within the same block; these are referred to as sub-block jobs and share resources (network and I/O).
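The smallest block size quoted above comes from dividing the compute nodes in a midplane evenly among its I/O nodes. A minimal sketch of that calculation follows, assuming the 512-node midplane and 8 I/O nodes per midplane stated in the text; the function name is illustrative only.

```python
NODES_PER_MIDPLANE = 512   # 16 node boards x 32 nodes
IO_NODES_PER_MIDPLANE = 8  # SciNet configuration, per the text
CORES_PER_NODE = 16


def smallest_block_nodes(nodes_per_midplane=NODES_PER_MIDPLANE,
                         io_nodes_per_midplane=IO_NODES_PER_MIDPLANE):
    """Compute nodes served by one I/O node, i.e. the smallest block."""
    return nodes_per_midplane // io_nodes_per_midplane


nodes = smallest_block_nodes()
print(f"smallest block: {nodes} nodes ({nodes * CORES_PER_NODE} cores)")
```

With a different I/O node count per midplane the smallest block changes accordingly, for example 16 I/O nodes per midplane would give 32-node blocks.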

Loadleveler

Job submission is done through LoadLeveler with a few Blue Gene specific keywords. The keyword "bg_size" is given in number of nodes, not cores, so bg_size = 32 would be 512 cores.

    #!/bin/sh
    # @ job_name = bgsample
    # @ job_type = bluegene
    # @ comment = "BGQ Job By Size"
    # @ initialdir = /bgsys/apps/$HOME/
    # @ error = $(job_name).$(Host).$(jobid).err
    # @ output = $(job_name).$(Host).$(jobid).out
    # @ executable = /bgsys/drivers/ppcfloor/hlcs/bin/runjob
    # @ arguments = --exe /bgsys/apps/$HOME/a.out
    # @ bg_size = 32
    # @ wall_clock_limit = 30:00
    # @ bg_connectivity = Torus
    # @ queue
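When several job sizes need to be tried, it can be convenient to generate the job script rather than edit it by hand. The sketch below renders a script with the same keywords as the sample above; the function loadleveler_script and its parameters are hypothetical helpers, not part of LoadLeveler, and only keywords that appear in the sample are emitted.

```python
def loadleveler_script(job_name, bg_size, exe, initialdir,
                       wall_clock_limit="30:00"):
    """Render a job script using the keywords from the sample above."""
    lines = [
        "#!/bin/sh",
        f"# @ job_name = {job_name}",
        "# @ job_type = bluegene",
        f"# @ initialdir = {initialdir}",
        "# @ error = $(job_name).$(Host).$(jobid).err",
        "# @ output = $(job_name).$(Host).$(jobid).out",
        "# @ executable = /bgsys/drivers/ppcfloor/hlcs/bin/runjob",
        f"# @ arguments = --exe {exe}",
        f"# @ bg_size = {bg_size}",  # number of nodes, not cores
        f"# @ wall_clock_limit = {wall_clock_limit}",
        "# @ bg_connectivity = Torus",
        "# @ queue",
    ]
    return "\n".join(lines) + "\n"


script = loadleveler_script(
    job_name="bgsample",
    bg_size=32,
    exe="/bgsys/apps/$HOME/a.out",
    initialdir="/bgsys/apps/$HOME/",
)
print(script, end="")
```

The output can be written to a file and submitted in the usual way for LoadLeveler job scripts.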

runjob

runjob on BGQ acts much like mpirun/mpiexec and is the launcher used to start jobs on BGQ. The "block" argument is the predefined group of nodes that are already booted. See Manual Block Creation below for how to create these blocks manually. Note that a block does not need to be rebooted between jobs, only if the number of nodes or the network parameters need to be changed. In this example, block R00-M0-N03-64 is made up of 2 node boards with 64 compute nodes (1024 cores).

runjob --block R00-M0-N03-64 --ranks-per-node=16 --np 1024 --cwd=$PWD : $PWD/code -f file.in

Also, the flag

--verbose #

where # is a level from 1 to 7, is very useful if you are trying to debug an application.

To run a sub-block job (i.e., share a block) you need to specify a "--corner" within the block at which to start the job and a 5D AxBxCxDxE "--shape". The following example shows 2 jobs sharing the same block.

    runjob --block R00-M0-N03-64 --corner R00-M0-N03-J00 --shape 1x1x1x2x2 --ranks-per-node=16 --np 64 --cwd=$PWD : $PWD/code -f file.in
    runjob --block R00-M0-N03-64 --corner R00-M0-N03-J04 --shape 2x2x2x2x1 --ranks-per-node=16 --np 256 --cwd=$PWD : $PWD/code -f file.in
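In both sub-block examples the value of --np equals the number of nodes in the --shape multiplied by --ranks-per-node (4 x 16 = 64 and 16 x 16 = 256). The sketch below derives --np that way and assembles the command line from the flags shown above. It is a convenience for keeping the numbers consistent, not a statement of what runjob itself requires, and the function names are hypothetical.

```python
from math import prod


def subblock_np(shape, ranks_per_node):
    """Ranks needed to fill every node of a sub-block shape 'AxBxCxDxE'."""
    return prod(int(d) for d in shape.split("x")) * ranks_per_node


def subblock_command(block, corner, shape, ranks_per_node, program):
    """Build a runjob command line using only the flags shown above."""
    np = subblock_np(shape, ranks_per_node)
    return (f"runjob --block {block} --corner {corner} --shape {shape} "
            f"--ranks-per-node={ranks_per_node} --np {np} "
            f"--cwd=$PWD : {program}")


# The two jobs from the example, sharing block R00-M0-N03-64.
for corner, shape in [("R00-M0-N03-J00", "1x1x1x2x2"),
                      ("R00-M0-N03-J04", "2x2x2x2x1")]:
    print(subblock_command("R00-M0-N03-64", corner, shape, 16,
                           "$PWD/code -f file.in"))
```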

To see running jobs and the status of available blocks, use the following commands on the service node:

    list_jobs
    list_blocks

*Manual Block Creation*

To reconfigure the BGQ nodes you can use bg_console or the web-based navigator from the service node:

bg_console

There are various options to create block types (section 3.2 in the BGQ admin manual), but the smallest is created using the following command:

    gen_small_block <blockid> <midplane> <cnodes> <nodeboard>
    gen_small_block R00-M0-N03-32 R00-M0 32 N03

The block then needs to be booted using:

allocate R00-M0-N03-32

If those resources are already booted into another block, that block must be freed before the new block can be allocated.

free R00-M0-N03

There are many other functions in bg_console:

help all

The BGQ default nomenclature for hardware is as follows:

(R)ack - (M)idplane - (N)ode board or block - (J)node - (C)ore

So R00-M01-N03-J00-C02 would correspond to the first rack, second midplane, fourth node board, first node, and third core (indices start at zero).
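A location string in this nomenclature can be split into its numeric fields with a regular expression. The following is a minimal sketch, assuming the R-M-N-J-C ordering given above with every level after the rack optional; parse_location is a hypothetical helper, and it handles hardware locations only, not block names such as R00-M0-N03-64.

```python
import re

# One optional group per level, in the order Rack-Midplane-Node board-Node-Core.
LOCATION = re.compile(
    r"^R(?P<rack>\d+)"
    r"(?:-M(?P<midplane>\d+))?"
    r"(?:-N(?P<node_board>\d+))?"
    r"(?:-J(?P<node>\d+))?"
    r"(?:-C(?P<core>\d+))?$"
)


def parse_location(location):
    """Return the numeric fields present in a hardware location string."""
    match = LOCATION.match(location)
    if match is None:
        raise ValueError(f"not a hardware location: {location!r}")
    return {name: int(value)
            for name, value in match.groupdict().items()
            if value is not None}


print(parse_location("R00-M01-N03-J00-C02"))
print(parse_location("R00-M0-N03-J04"))
```

The returned values are the numbers exactly as written in the string, so any conversion to ordinal positions is left to the caller.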

I/O

GPFS