Accelerator Research Cluster

| Cell Development Cluster | |

|---|---|

| Installed | June 2010 |

| Operating System | Linux |

| Interconnect | Infiniband |

| Ram/Node | 32 Gb |

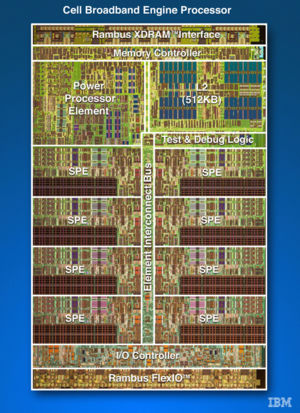

| Cores/Node | 2 PPU + 16 SPU |

| Login/Devel Node | cell-srv01 (from login.scinet) |

| Vendor Compilers | ppu-gcc, spu-gcc |

The Accelerator Research Cluster is a technology evaluation cluster combining 14 IBM PowerXCell 8i "Cell" nodes and 8 Intel x86_64 "Nehalem" nodes containing NVIDIA GPUs. Each Cell node is an IBM QS22 blade with two 3.2 GHz IBM PowerXCell 8i CPUs and 32 GB of RAM; each CPU has 1 Power Processing Unit (PPU) and 8 Synergistic Processing Units (SPUs). Each Intel node has two 2.53 GHz quad-core Xeon X5550 CPUs and 48 GB of RAM.
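The "Cores/Node" entry in the table follows directly from the processor counts above. As a worked check, counting each PPU and SPU as one core, the same way the table does:

$$
\begin{aligned}
\text{Cell (QS22) node:}\quad & 2~\text{CPUs} \times (1~\text{PPU} + 8~\text{SPU}) = 2~\text{PPU} + 16~\text{SPU} = 18~\text{cores},\\
\text{Nehalem node:}\quad & 2~\text{CPUs} \times 4~\text{cores} = 8~\text{cores}.
\end{aligned}
$$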

Login

First log in via ssh with your SciNet account at login.scinet.utoronto.ca. From there, proceed to cell-srv01, which is currently the gateway machine.

Compile/Devel/Compute Nodes

Cell

You can log into any of the 12 nodes blade[03-14] directly to compile, test, and run Cell-specific or OpenCL code.

Nehalem (x86_64)

You can log into any of the 8 nodes cell-srv[01-08] directly; however, they are not yet configured to work with the Cell blades.

Local Disk

This test cluster currently cannot see the global /home and /scratch file systems, so you will have to copy your code (with scp, etc.) to a separate local /home dedicated to this cluster. Initially, you will probably also want to copy and modify your SciNet .bashrc, .bash_profile, and .ssh directory onto this system.